Virtualisation of the Chemnitz Opera House Part 2: Post-modelling and optimisation

To realise live events in virtual reality, 3D models of event spaces have to be created. For smaller cultural institutions to benefit from this in the future, the effort required to create virtual event locations must be kept to a minimum. We experimented with different methods for virtualising a large opera hall and share our experiences here.

In the first step, we were able to create a geometrically correct representation of the Chemnitz opera hall in a short time using photogrammetry. However, the resulting model lacks the visual quality and compactness needed for virtual applications. In a further step, the model must be optimised, which is currently only possible by hand. The focus is on reducing optical noise and image errors, optimising performance, texturing the surfaces and re-modeling the seats.

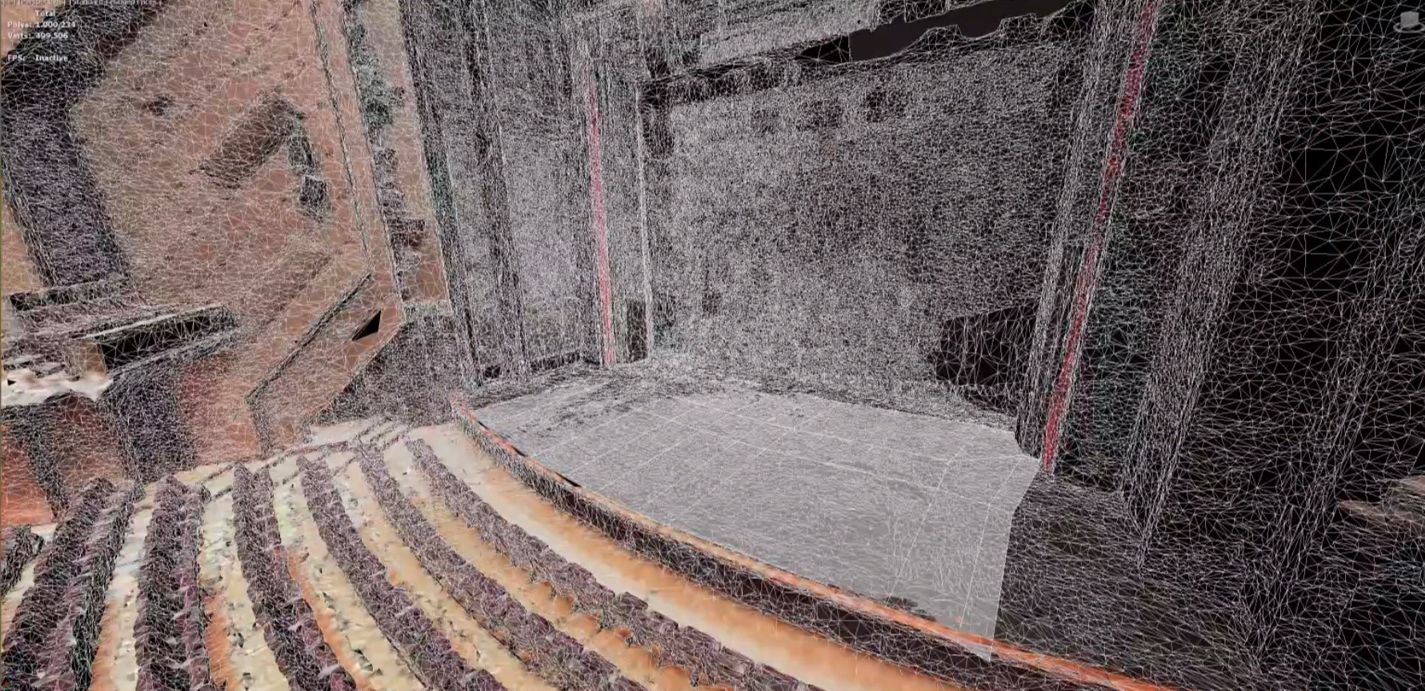

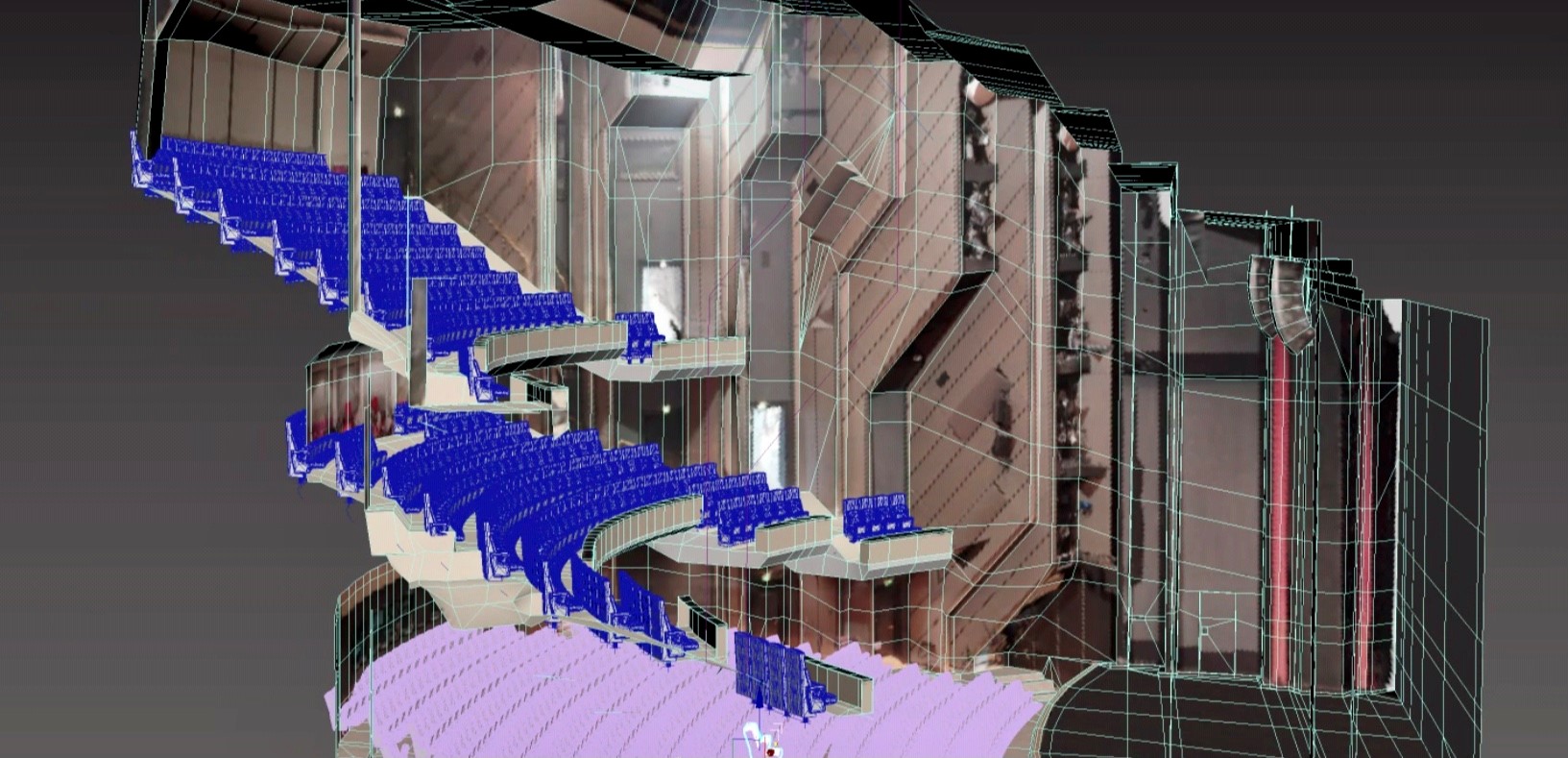

3D model and 3D mesh of the Chemnitz Opera House based on photogrammetry; the model contains too many polygons for performant rendering in VR and, despite its high level of detail, shows various artifacts and optical noise

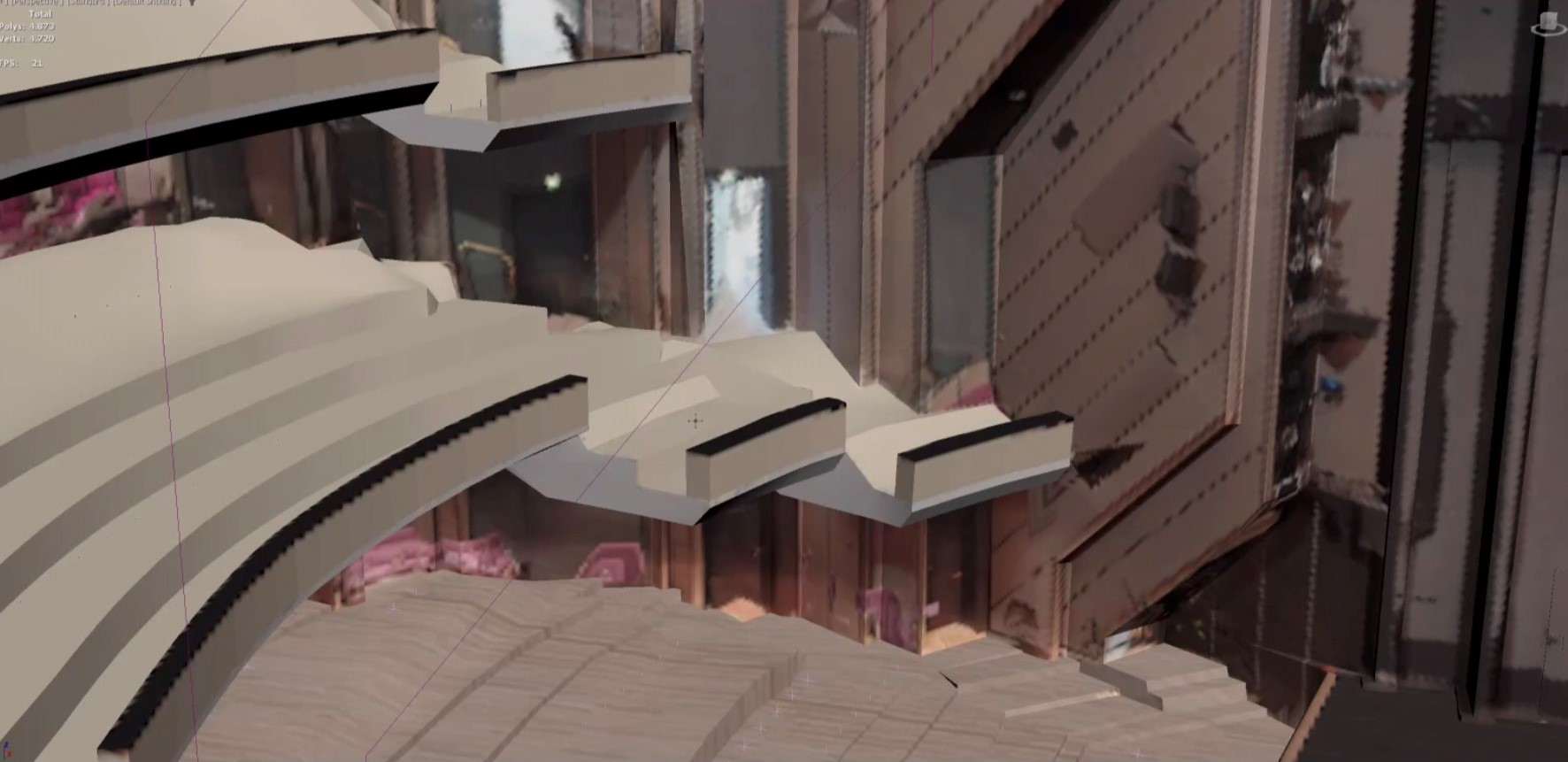

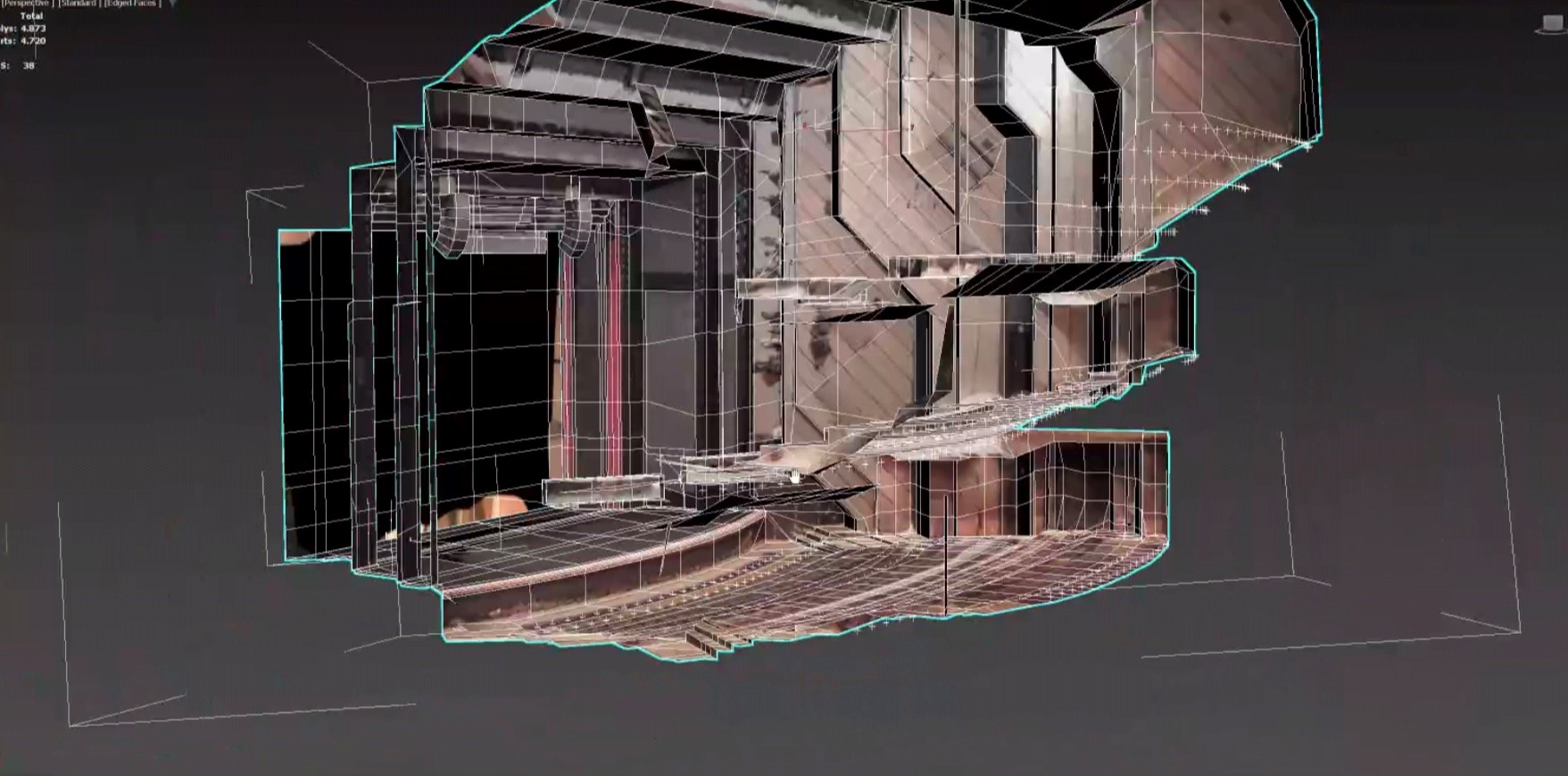

Model with remodeled elements and significantly reduced number of polygons

Optical noise reduction and performance optimisation

The model obtained from photogrammetry has more than one million polygons. On smooth surfaces in particular, inaccurate depth estimation introduces unnecessary geometry, which noticeably reduces immersion. Automated optimisation methods cannot yet solve this problem satisfactorily, so manual reconstruction is necessary, especially for smooth geometries. With the photogrammetry model loaded as an overlay in 3D modelling software, the geometries can easily be redrawn on top of it. After processing, the optimised model contains only 4873 polygons.
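To put these numbers in perspective, here is a small Python sketch. It treats the "more than one million" polygons as exactly one million, so the result is a lower bound. The 100 x 100 sampling density for a flat wall is a purely hypothetical figure, chosen only to show why a noisy planar surface wastes polygons compared with a redrawn one.

```python
def grid_triangles(samples_x: int, samples_y: int) -> int:
    """Number of triangles in a regular grid mesh with the given vertices per side."""
    return 2 * (samples_x - 1) * (samples_y - 1)


def reduction(before: int, after: int) -> tuple[float, float]:
    """Return (reduction factor, percentage of polygons removed)."""
    factor = before / after
    removed_percent = 100 * (1 - after / before)
    return factor, removed_percent


# Hypothetical flat wall: densely sampled by photogrammetry vs. redrawn by hand.
noisy_wall = grid_triangles(100, 100)  # assumed sampling density, not from the project
clean_wall = grid_triangles(2, 2)      # a single quad made of two triangles

# Figures from the text; one million is used as a lower bound for "more than one million".
factor, removed = reduction(1_000_000, 4873)

print(f"flat wall: {noisy_wall} triangles before, {clean_wall} after redrawing")
print(f"whole model: at least {factor:.0f}x fewer polygons, {removed:.1f}% removed")
```

Under these assumptions the model shrinks by a factor of at least about 205, which means more than 99.5% of the original polygons are removed.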

Texturing of the surfaces

In addition to clean geometries, high-quality textures are particularly important for a high level of immersion. The surfaces obtained from the photogrammetrically derived model are only of limited use for this: reflections and uneven illumination captured in the photos produce irregular, unnatural-looking surfaces. Texture databases are available for subsequent texturing. Alternatively, the textures obtained from photogrammetry can be retouched, or completely new textures can be created artificially in the modeling software.

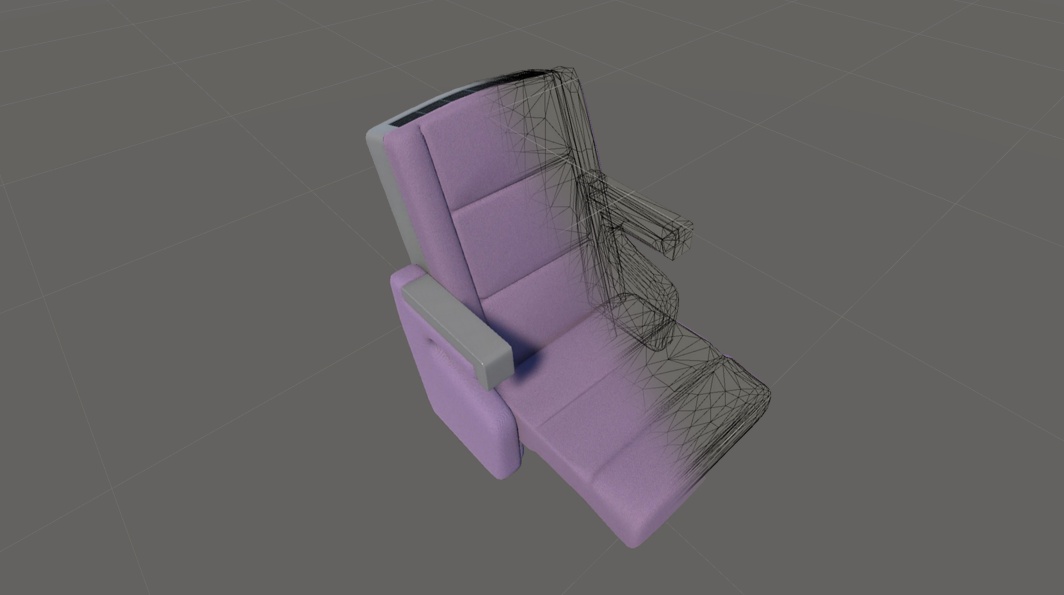

Re-modeling of the seats and automated seating

The seating is a special case in the opera house. Because of their geometry, for example the covers, and their sheer number, the seats cannot be captured automatically and correctly by photogrammetry. In addition, all 714 seats are identical. For performance reasons, it is therefore necessary to model a single seat and then duplicate it in the virtual application. The seat geometry then exists only once in the memory of the graphics card instead of 714 times, which significantly increases performance. Automatic placement also makes it possible to adjust the seating individually depending on the scenario. Furthermore, this segmentation helps to realise the functions of a virtual audience.
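The idea of modelling one seat and placing it many times can be sketched in a few lines of Python. This is a minimal illustration, not the project's implementation. The layout of 21 rows with 34 seats each (which happens to give 714), the spacing values, the vertex count per seat and the byte sizes are all hypothetical assumptions; the real hall has a different layout.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SeatInstance:
    """Placement of one seat; the seat mesh itself is stored only once."""
    row: int
    number: int
    x: float  # metres across the hall
    y: float  # metres of height from the rake of the floor
    z: float  # metres away from the stage


def place_seats(rows, seats_per_row, seat_pitch=0.55, row_pitch=0.9, rise_per_row=0.12):
    """Generate one placement per seat on a simple raked grid (assumed layout)."""
    x_start = -(seats_per_row - 1) * seat_pitch / 2  # centre each row on x = 0
    return [
        SeatInstance(r, s, x_start + s * seat_pitch, r * rise_per_row, r * row_pitch)
        for r in range(rows)
        for s in range(seats_per_row)
    ]


def duplicated_bytes(vertices, copies, bytes_per_vertex=32):
    """Memory if every seat carries its own copy of the mesh."""
    return vertices * copies * bytes_per_vertex


def instanced_bytes(vertices, instances, bytes_per_vertex=32, bytes_per_transform=64):
    """Memory if the mesh is stored once plus one 4x4 float transform per seat."""
    return vertices * bytes_per_vertex + instances * bytes_per_transform


seats = place_seats(rows=21, seats_per_row=34)  # hypothetical layout, 21 * 34 = 714
SEAT_VERTICES = 2000                            # assumed vertex count of one seat

copied = duplicated_bytes(SEAT_VERTICES, len(seats))
shared = instanced_bytes(SEAT_VERTICES, len(seats))
print(f"{len(seats)} seats: {copied / 1e6:.1f} MB duplicated vs {shared / 1e6:.2f} MB instanced")
```

Because the placements are generated rather than hand-placed, changing `rows`, `seats_per_row` or the spacing yields a different seating arrangement for another scenario, and each `SeatInstance` offers a natural slot for attaching a virtual audience member.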

Redesigned seats and automated distribution in 3D models of the opera house

How efficient is the workflow and does it stand in the way of a democratisation of virtual culture?

Depending on the use case, virtual copies of real event locations can be a relevant option for implementing virtually extended events in the future. In the example presented here, the described process chain for creating a virtual twin optimised for virtual applications required a labour input of about 15 person days. With repeated implementation, experience and refined workflows can reduce the labour input further. The one-time expense is thus manageable for event venues. Even small theatres have relatively large budgets due to their personnel-intensive business operations. If virtual extensions become viable business models in the course of Metaverse developments, the creation of virtual event locations, at least, will not be an obstacle.

Are AI developments able to optimise this process chain in the future?

Many AI-based photogrammetry solutions are currently emerging, such as Neural Radiance Fields (NeRF). From only a small amount of image material, they generate virtual representations very quickly, with partly impressive visual results. The idea of using a mobile phone camera to create a usable virtual twin in real time is extremely appealing. However, given the complexity and size of event locations such as the one presented here, manual touch-ups will probably remain indispensable. The workflow presented here can certainly be further optimised with the help of AI.